Welcome back to another episode of How-To-What-Is! The iPhone 12 series has just launched, and some of the biggest upgrades are in the camera. As Ray mentioned, the most exciting device here, with regard to new camera features, is the iPhone 12 Pro Max. ProRAW, fancy stabilisation tech, and a larger sensor all sound very interesting, but what does all of that even mean?

Today’s episode should help you understand what those technical terms actually mean.

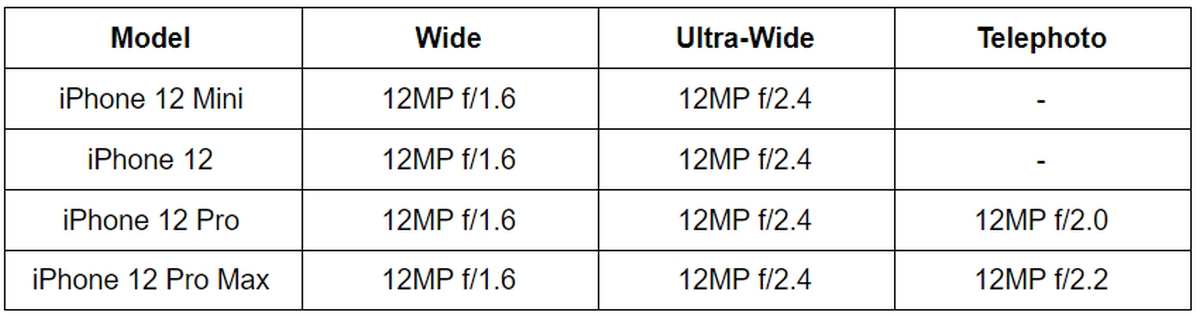

Camera specs

iPhone 12 Pro Max – 2.5X

Apple ProRAW (not available yet)

ProRAW is Apple’s version of the RAW image format, which offers more control when you’re editing the image in post. The image file contains unprocessed or minimally processed data from the camera’s sensor, so you can adjust things like exposure and colour balance after the fact without losing as much detail.

With a regular JPEG file, your phone decides the colour balance, exposure, noise reduction, sharpening, and other elements of a photo. It also compresses the image to minimise file size, which means you lose a fair amount of detail. Still, JPEGs are useful because you get photos that are instantly social media-ready, and in general, the quality is sufficient for most users.

Unfortunately, Apple ProRAW is not available yet here in Malaysia, but Apple has confirmed that it will arrive in a future OTA update.

LiDAR sensors (Light detection and ranging)

- LiDAR uses lasers that ping off objects and return to the source, measuring distance by timing the travel of the light.

- LiDAR scanners help with better Night Mode portraits and faster autofocusing.
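To make the timing idea concrete, here is a minimal Python sketch of the distance arithmetic. The function names and the 5-metre example are our own illustration, not Apple's implementation; the only thing assumed is that the light pulse travels out and back at the speed of light.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # speed of light in a vacuum


def distance_from_round_trip(round_trip_seconds: float) -> float:
    """Distance to an object, given how long a light pulse took to go out and back."""
    # The pulse covers the distance twice (there and back), so halve the path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2


def round_trip_for_distance(distance_m: float) -> float:
    """Round-trip travel time of a light pulse for an object distance_m away."""
    return 2 * distance_m / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    # Hypothetical example: an object 5 metres from the phone.
    t = round_trip_for_distance(5.0)
    print(f"Round trip: {t * 1e9:.1f} ns")
    print(f"Recovered distance: {distance_from_round_trip(t):.2f} m")
```

For an object 5 metres away, the round trip takes roughly 33 nanoseconds, which gives a sense of how precisely the sensor has to time each pulse.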

For comparison, ToF sensors, like those we’ve seen on some Android devices, emit infrared light to sense distance, which lets the phone mimic a camera’s shallow depth of field and create the bokeh effect. ToF sensors use a single pulse of infrared light to create 3D maps, while LiDAR, as we said, uses lasers that can map a more complex environment.

This gives LiDAR two main advantages: better range and better object “occlusion” (where virtual objects appear to disappear behind real objects). LiDAR also greatly enhances augmented reality. LiDAR sensors are only available on the iPhone 12 Pro and Pro Max.

Dolby Vision HDR video recording

This is Dolby’s version of HDR (High Dynamic Range). The HDR standard is supposed to offer better colours and wider dynamic range in your videos, and there are a number of different standards. HDR10 is the most commonly used, as it is a royalty-free standard, while Dolby Vision HDR requires a license fee from manufacturers.

What this means is that even if your iPhone shoots and edits in Dolby Vision HDR, you’ll need a monitor/TV that actually supports the standard. On an incompatible display, your video might look even worse.

The good news is that all four models across the range have support for Dolby Vision HDR. However, the iPhone 12 models can record Dolby Vision in 4K at 30fps, while the iPhone 12 Pro models can shoot the format in 4K at 60fps. Shooting at the higher frame rate gives you more flexibility to slow down footage in post, or to produce videos with very smooth motion and little motion blur.
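If you want to see why 60fps gives you more room to slow things down, here is a small Python sketch of the arithmetic. The function names and the 30fps and 24fps timelines are our own assumptions for illustration; your editing app may handle frame rates differently.

```python
def slowdown_factor(capture_fps: float, timeline_fps: float) -> float:
    """How many times slower footage plays when every captured frame is kept."""
    if capture_fps <= 0 or timeline_fps <= 0:
        raise ValueError("frame rates must be positive")
    return capture_fps / timeline_fps


def frame_interval_ms(fps: float) -> float:
    """Time between consecutive frames, in milliseconds."""
    if fps <= 0:
        raise ValueError("frame rate must be positive")
    return 1000 / fps


if __name__ == "__main__":
    # Assumed example: footage dropped onto a 30fps editing timeline.
    for capture_fps in (30, 60):
        factor = slowdown_factor(capture_fps, 30)
        interval = frame_interval_ms(capture_fps)
        print(f"{capture_fps}fps: {factor:.1f}x slow-down, {interval:.1f} ms per frame")
```

On a 30fps timeline, 60fps footage can be slowed to half speed without repeating any frames, while 30fps footage has no headroom at all. The shorter gap between frames at 60fps is also what makes motion look smoother.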

It’s worth noting that YouTube doesn’t officially support Dolby Vision HDR, although Netflix does support both HDR10 and Dolby Vision. To enable HDR:

Step 1: Launch the Settings app on your iPhone 12.

Step 2: Scroll down and tap on the Camera option.

Step 3: Now, tap on Record Video.

Step 4: Toggle on HDR Video to enable Dolby Vision.

Dolby Vision HDR is available on all four iPhone 12 models.

Sensor-shift image stabilisation

Most smartphones come with Optical Image Stabilisation (OIS), where the lens itself shifts to reduce blur from shaky hands when shooting photos and videos.

However, the iPhone 12 Pro Max comes with a sensor-shift optical image stabilisation system, which is more similar to In-Body Image Stabilisation (IBIS) commonly found on mirrorless cameras. Rather than letting the lens do all the work, this system incorporates a floating sensor to counterbalance hand movements.

Apple says that this new tech can make stabilisation adjustments over 5,000 times per second. You should also know that sensor-shift is only available on the wide (main) camera, so you won’t get it on the ultra-wide and telephoto cameras.
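To put that 5,000 figure in perspective, here is a rough Python sketch of what it works out to per video frame. It treats Apple's quoted number as a flat, evenly spaced rate, which is our simplifying assumption, and the function names are made up for this example.

```python
ADJUSTMENTS_PER_SECOND = 5_000  # figure quoted by Apple, assumed to be evenly spaced


def adjustment_interval_ms(adjustments_per_second: float = ADJUSTMENTS_PER_SECOND) -> float:
    """Time between two stabilisation adjustments, in milliseconds."""
    return 1000 / adjustments_per_second


def adjustments_per_frame(video_fps: float,
                          adjustments_per_second: float = ADJUSTMENTS_PER_SECOND) -> float:
    """How many adjustments fit into the time of a single video frame."""
    return adjustments_per_second / video_fps


if __name__ == "__main__":
    print(f"One adjustment every {adjustment_interval_ms():.1f} ms")
    for fps in (30, 60):
        print(f"{fps}fps video: about {adjustments_per_frame(fps):.0f} adjustments per frame")
```

In other words, the sensor would be nudged about once every 0.2 milliseconds, or well over a hundred times during a single frame of 30fps video.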

Apple doesn’t really explain the technical details of how this is better than OIS, but you can view the results in the embedded video above. Sensor-shift is only available on the iPhone 12 Pro Max.

The iPhone 12 Pro Max’s larger sensor

Other than sensor shift, Apple has also given the iPhone 12 Pro Max a 47% larger sensor in the wide camera, with bigger 1.7-micron pixels, compared to the iPhone 12 Pro.

This basically means that you should be able to take better low-light photos: a larger sensor gathers more light, so you will be able to capture brighter images when it’s dark.
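For a rough idea of how much extra light a 47% larger sensor could gather, here is a back-of-the-envelope Python sketch. It assumes the same scene, aperture, and exposure time on both phones, and that light gathered scales directly with sensor area; those are our simplifications, and real-world results also depend on Apple's image processing.

```python
import math


def light_gain(area_increase_percent: float) -> float:
    """Relative light gathered by a sensor that is larger by the given percentage."""
    return 1 + area_increase_percent / 100


def extra_stops(area_increase_percent: float) -> float:
    """The same gain expressed in photographic stops (one stop = double the light)."""
    return math.log2(light_gain(area_increase_percent))


if __name__ == "__main__":
    gain = light_gain(47)
    stops = extra_stops(47)
    print(f"Light gathered: {gain:.2f}x, or about {stops:.2f} stops")
```

Under those assumptions, the larger sensor collects 1.47 times the light, which is a little over half a stop in photography terms.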

For a side-by-side comparison between the iPhone 12 Pro and Pro Max, watch the video above at 6:52.

So, that’s it for today’s episode of HTWI. If today’s video helped you understand your new iPhone’s camera capabilities a little bit better, we’d love to hear from you! Meanwhile, you can always chat with us over at RKMD, our Facebook group—or leave suggestions for future episodes of HTWI!